How We Give Claude Code Long-Term Memory with Reusable Skills

Three building blocks close the gap between a capable AI and a reliable team member: skills that encode one task precisely, rules files that capture conventions once, and templates that show Claude what good output looks like.

Stop Maintaining Tests by Hand: Causify's Open-Source pytest Framework

Three things that turn test maintenance from a growing chore into a non-event: golden files for expected output, automatic reproducibility across machines, and speed-enforced test tiers.

Monitoring Airflow on Kubernetes: A Production Stack

Running Airflow on Kubernetes gives you two monitoring layers that do not talk to each other: Kubernetes knows whether the container is alive, and Airflow knows whether pipelines are working. This post describes the production stack we built to bridge that gap — StatsD, a metric exporter, Prometheus, Alertmanager, and Grafana — with a focus on what each component does and why it is designed the way it is.

Brute Force vs Structure: The Coming Compute War in AI

The next contest in AI may not simply be model versus model, or even open versus closed. It may be brute force versus structure: systems that spend ever more compute rediscovering the world from tokens, versus systems that learn reusable mechanisms and reason with them directly.

How Causify Is Coming Up for Air

Better work does not come from doing more. It comes from building systems that reduce friction, improve clarity, and let teams focus on what matters.

The Causal Tile Engine: A New Layer in the AI Stack

If foundation models are becoming the universal reasoning substrate, something important is still missing in the middle: an engine for storing, composing, updating, and serving reusable causal mechanisms.

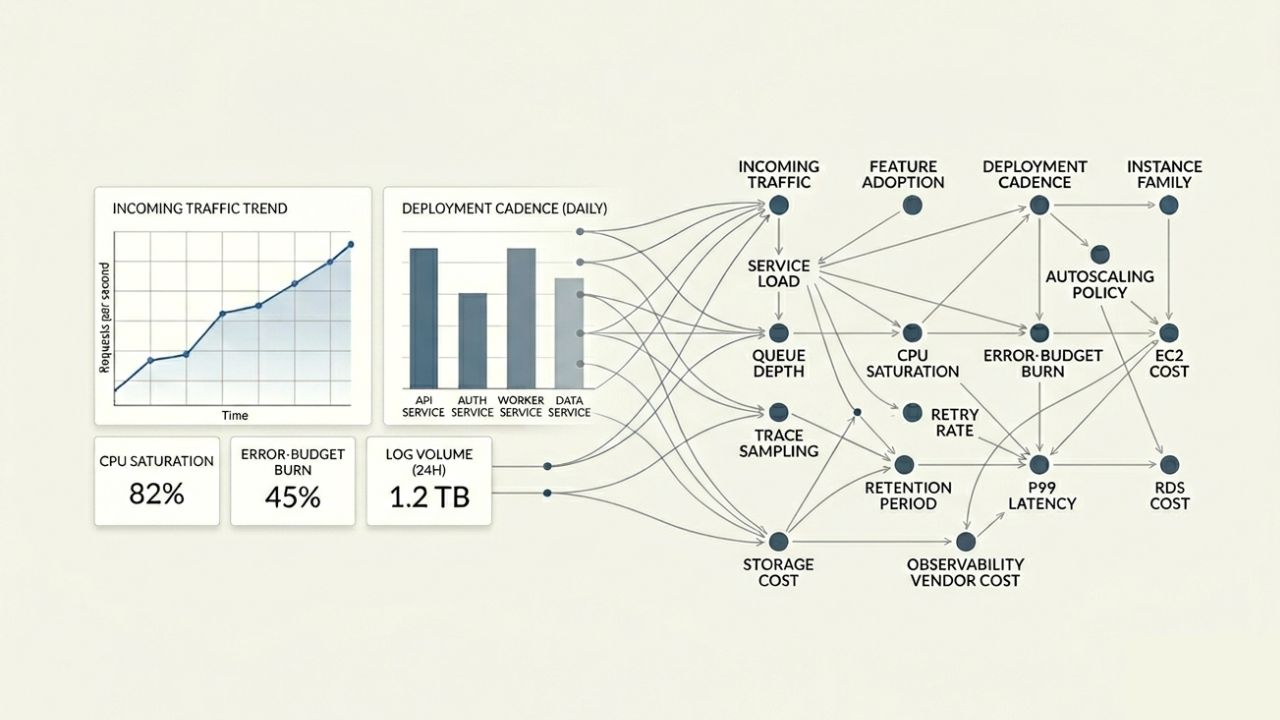

From Dashboards to Decisions: Why Observability and FinOps Need Causal Graphs

A beautiful dashboard is not a decision system. Observability and FinOps tools are excellent at showing what happened, but teams still struggle to answer what caused it, what tradeoff matters, and what will happen if they change the wrong thing. Causal graphs turn cloud cost and performance from reporting into steering.

The Causal Cache: Why Enterprise Copilots Keep Relearning the Same Company

Enterprise copilots keep rediscovering what drives revenue, churn, latency, and cost as though each question were the first time anyone had asked it. A causal cache offers a different path: persistent, reusable reasoning about how a company actually works.

Beyond Tokens: Why LLMs Need Reusable Chunks of Reasoning

Language models are brilliant at working with tokens, but many real-world decision problems are built from recurring mechanisms, not fresh strings. The next leap may come from reusable, causal chunks of reasoning that sit above tokens rather than below them.

Causal Advantage: Why Reusable Reasoning Will Separate the Winners from the Experiments

The next advantage in AI will not come only from bigger models. It will come from systems that remember, reuse, and compound reasoning over time.

Rethinking Airflow Monitoring for a Kubernetes-Native World

Moving Airflow to Kubernetes exposed the limits of our existing monitoring. Static, agent-based approaches struggled in a dynamic system. We needed something that adapted automatically, reduced operational overhead, and gave better visibility into workflows.

Causal Tiling: Stop Paying for the Same Reasoning Twice

Modern AI keeps rediscovering the same structure, the same relationships, and the same explanations. Causal tiling offers a way to reuse reasoning the way cloud systems reuse infrastructure.

Why Trust Is Becoming Critical for Enterprise AI Systems

Enterprise AI adoption stalls not because models underperform, but because organizations cannot verify, audit, or control them. Trust — built through compliance standards like SOC 2, continuous monitoring, and clear access controls — is no longer optional. It is the baseline requirement that determines whether AI moves from experimentation into production.

Causify Achieves SOC 2 Type II Compliance

Causify is now SOC 2 Type II compliant, independently validating that our causal AI platform meets enterprise standards for Security, Availability, and Confidentiality.

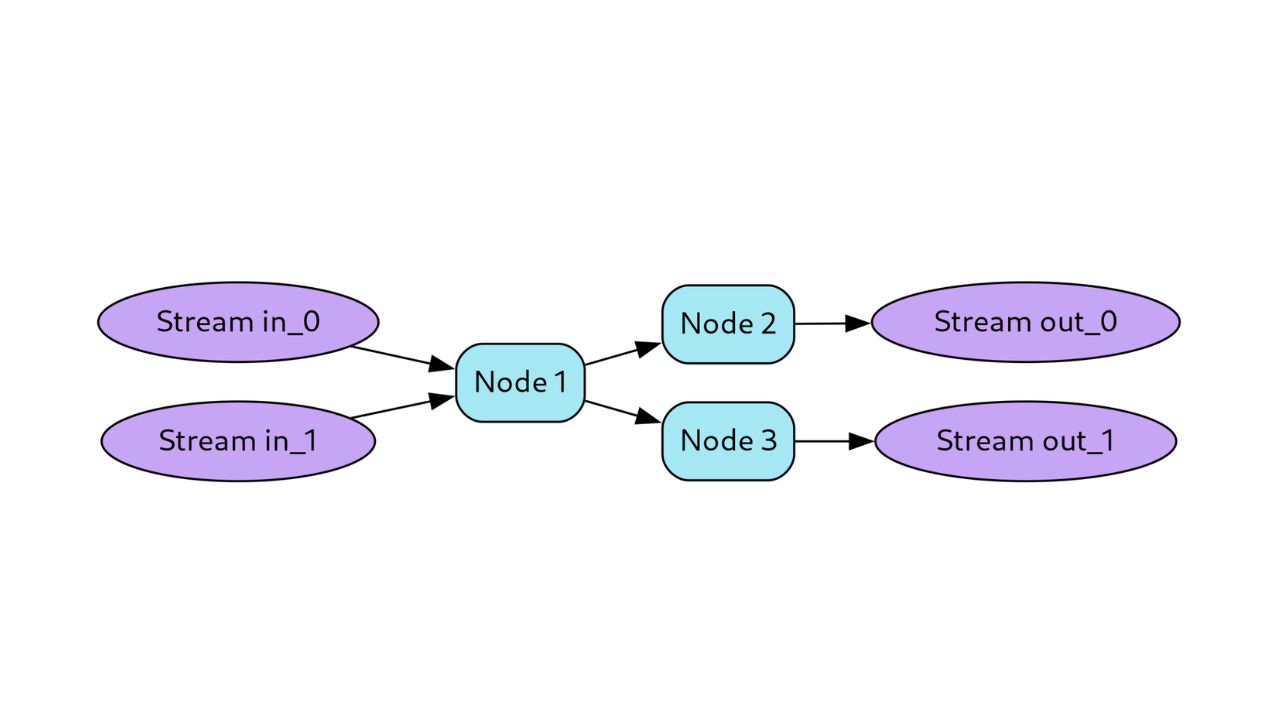

Causify DataFlow: A Framework For High-performance Machine Learning Stream Computing

DataFlow is a computational framework for simulating causal models on time series data using a directed acyclic graph architecture enhanced with knowledge time semantics for temporal causal reasoning.

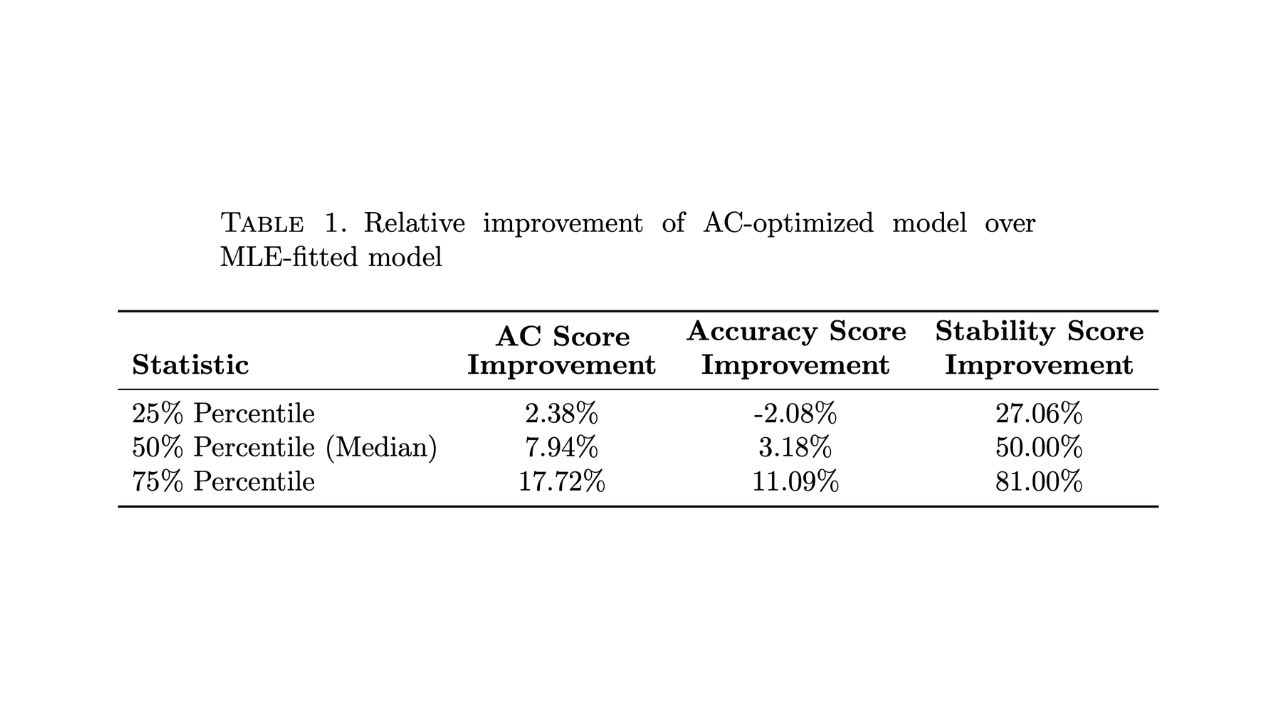

Beyond Accuracy: A Stability-Aware Metric for Multi-Horizon Forecasting

Traditional forecasting models optimize only for accuracy, ignoring an important issue: predictions that fluctuate significantly from day to day undermine confidence in production. This paper introduces the AC score, a metric that balances accuracy and temporal stability, achieving a 91% reduction in forecast volatility while improving multi-step prediction accuracy by up to 26%.

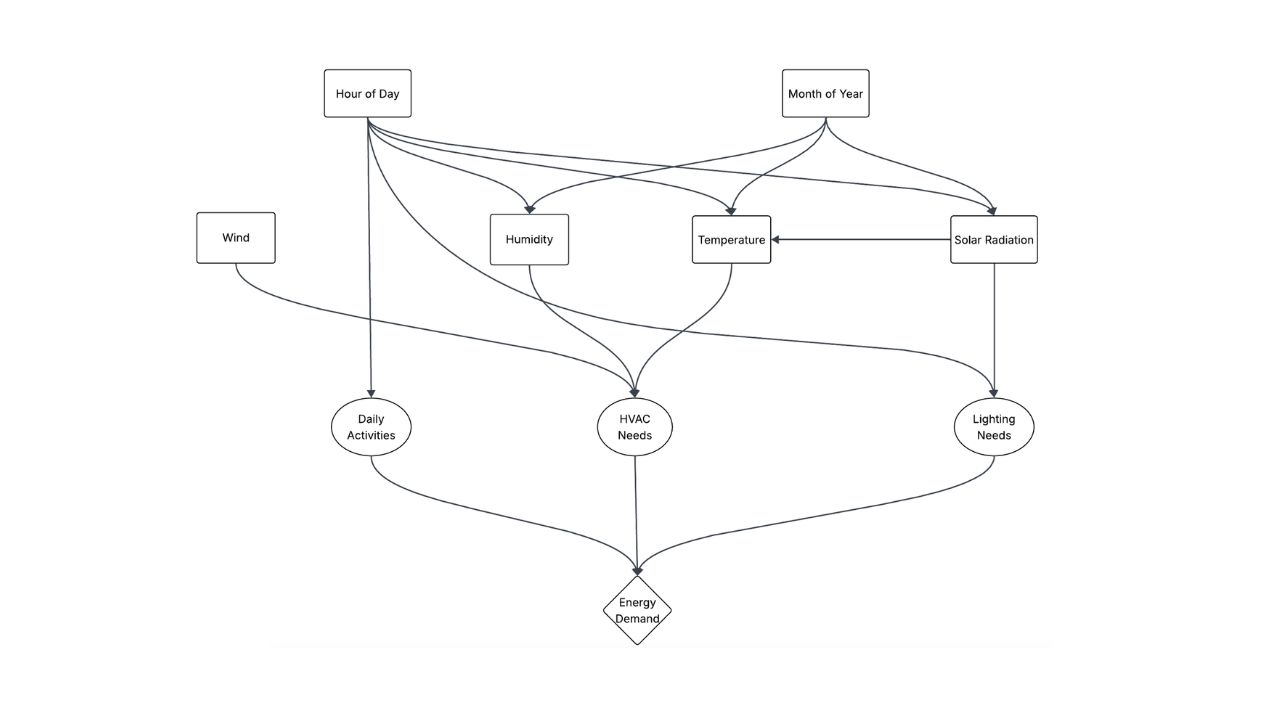

Causal Inference in Energy Demand Prediction

Structural causal models outperform traditional energy forecasts by revealing critical interdependencies that correlation-based approaches fail to capture.

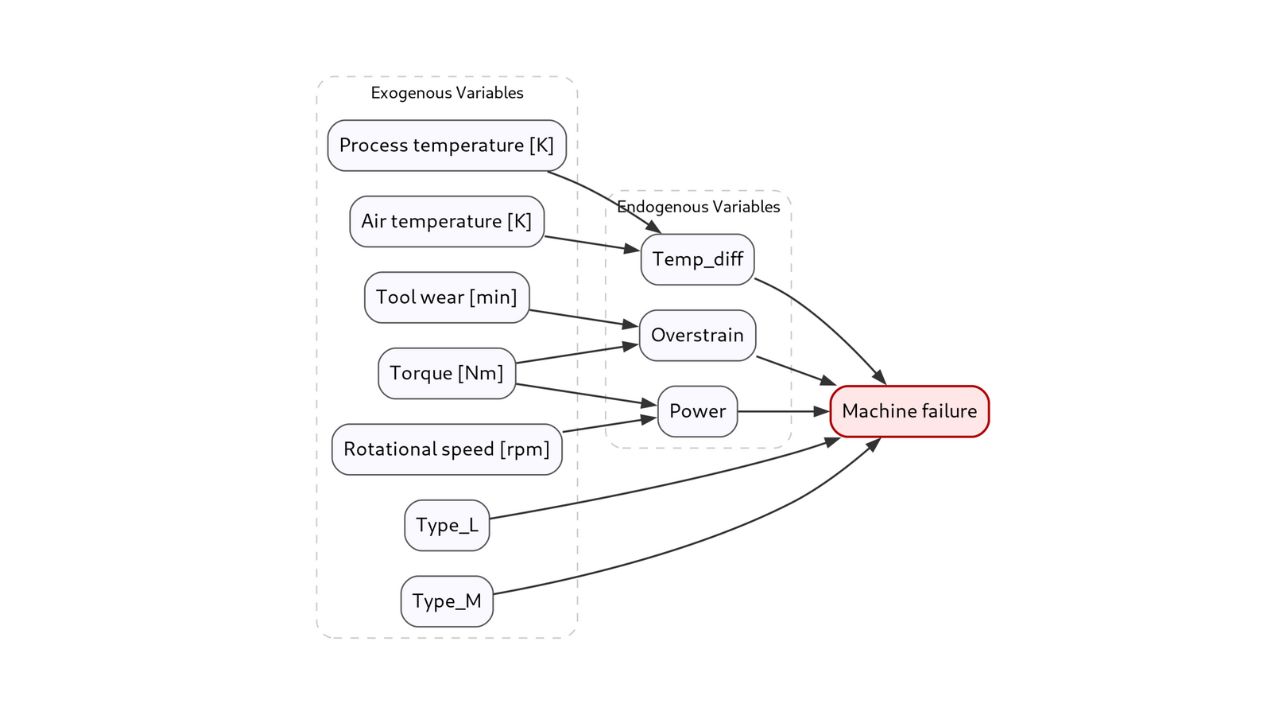

A Benchmark of Causal vs Correlation AI for Predictive Maintenance

Causal AI achieved a $49,500 annual economic advantage over the best ML baseline, with 93.9% recall, by explicitly modeling failure mechanisms rather than relying on statistical correlations.

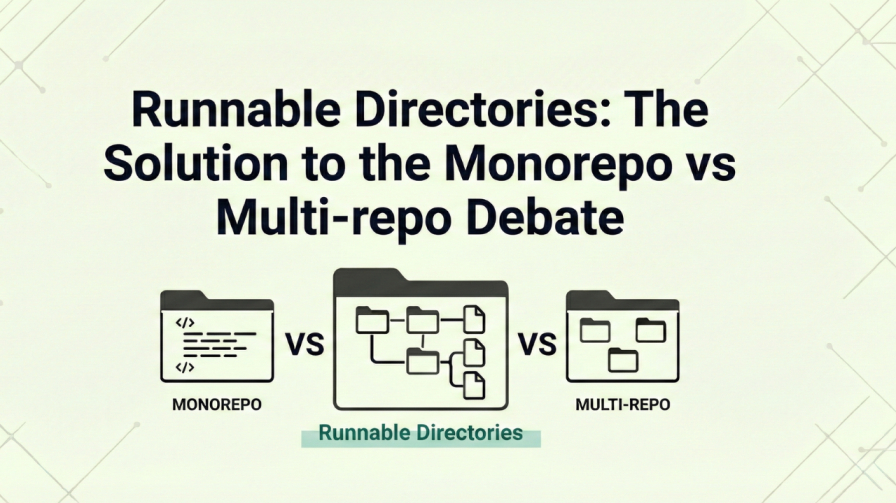

A Look at Runnable Directories: The Solution to the Monorepo vs Multi-repo Debate

A runnable directory is a hybrid approach to code organization that combines the best of monorepos and multi-repos by making each directory self-contained, buildable, testable, and deployable.

Causal AI: The Next Generation of Predictive Maintenance

A benchmark study of 10,000 CNC machines shows that causal AI delivers $80K more in annual savings than traditional ML while reducing false alarms by 97%.

How We Ask for Feedback at Causify

Feedback can be toxic; clarity and kindness are the antidotes to frustration and wasted time.

Your Data Isn't as Ready as Your Slide Deck Says

Most AI projects fail because the data is bad: inconsistent, low-quality, unowned, and held together by hope, cron, and a spreadsheet named `final_v7_really_final.xlsx`.

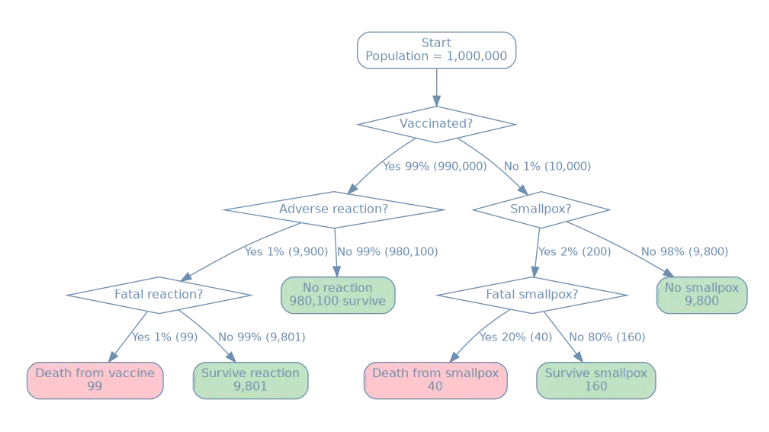

A Causal Analysis of 'Vaccine Kills' Claim

When analyzing the claim 'Vaccine kills more than disease', looking only at raw counts is a classic human mistake. Causal counterfactuals make the policy choice clear.

Data Is Dumb (And That's Why Causality Matters)

AI learns patterns, not reasons. Without causality, your model is just an expensive correlation machine.

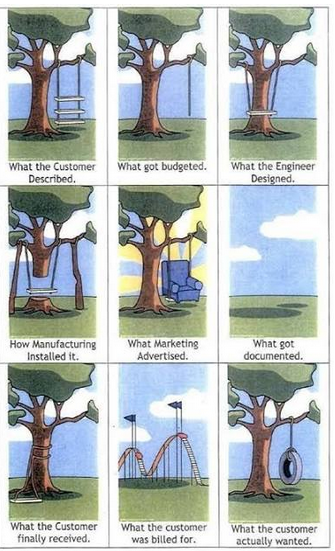

Do We Have This Feature?

Your customers don't know what they want. Build 80% solutions and adapt when reality hits.

Quote of the Day: AI Has Broken Wright’s Law

The future favors data masters; wisdom beats experience in the AI-driven era.

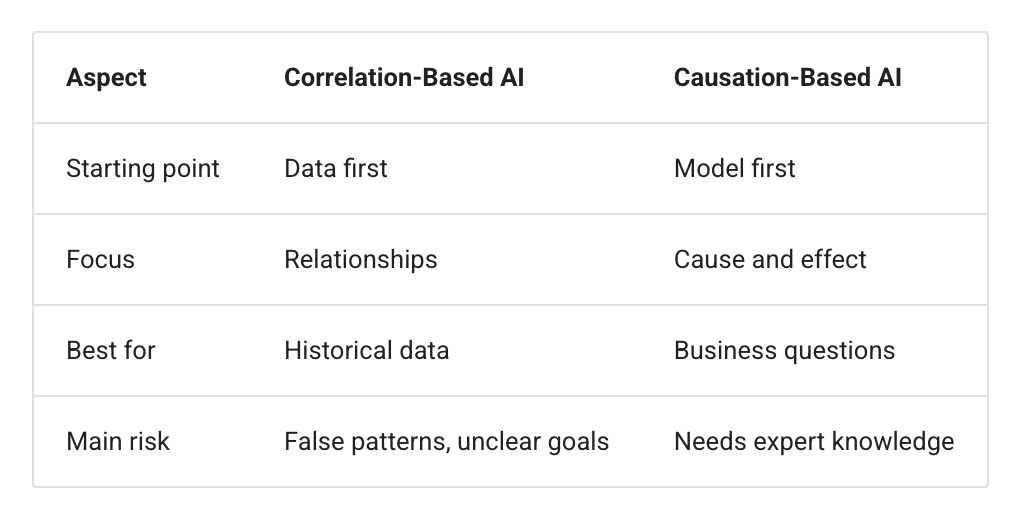

Causal ELI5: Correlation vs Causal Models

Traditional AI thinks umbrellas cause rain. Causal AI understands the world. Which one do you think is better?

The Future is Causal

Pattern recognition hit its ceiling. Enterprises betting on correlations will lose the next decade.

Correlation is not Causation: Wind Turbine Edition

Machine learning can't fix wind turbines—it mistakes symptoms for causes. Causal AI targets root problems.

Why FAANG Are Betting on Causal AI

Microsoft, Meta, and Netflix proved that causal AI works at scale. Still using correlations? You're making amateur-hour decisions.

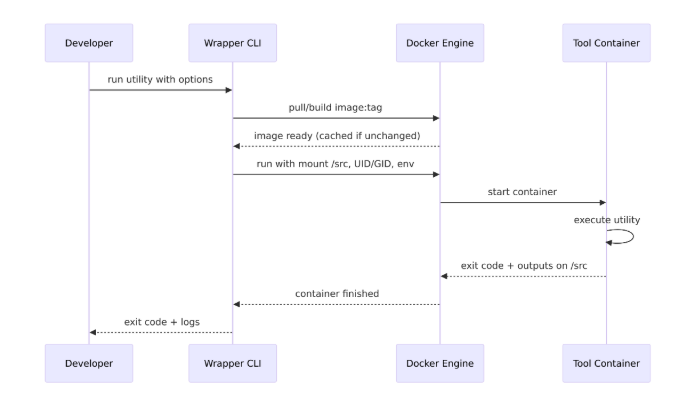

Docker Executables: No More Install Guides

Stop wasting hours on dependency hell. Package each tool once, run it everywhere, forever.

What's the ETA?

Engineers hate ETAs but demand them as managers. Your butt on the line cures ETA allergy fast.

Causal AI is the Next Step of Predictive Analytics

Traditional AI predicts outcomes but can't explain why. Causal AI finally answers 'what should we do?'

Why Causal AI is the Future of Automated Decision-Making

Traditional AI is blind: it predicts outcomes but can't explain why or tell you what to do.

Cracking the Long Tail of Data Science Problems

Big data is easy. Small, noisy data is where ML actually fails, and where real money gets made.

AI for Optimal Decision-Making

Your gut instinct is killing profits: AI should make decisions for you, not just predict outcomes.

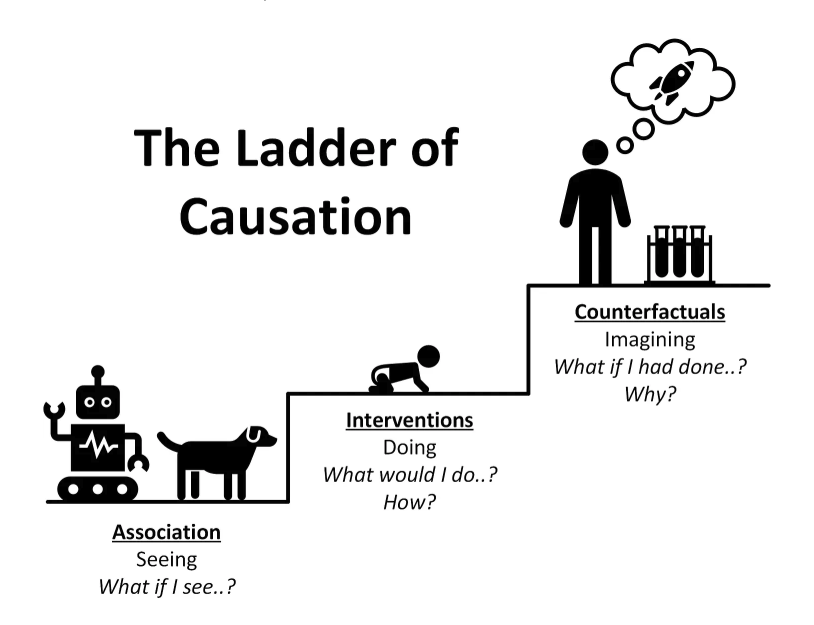

Causal ELI5: Ladder of Causality

Your AI can predict everything but understands nothing without climbing the causality ladder.

From Theory to Billions: How Causal AI Became Enterprise Infrastructure

Correlation-based ML is dead. Causal AI delivers 72% cost cuts while competitors waste millions on wrong insights.

Causal News: Causal AI Market Research

Prediction without causation is guessing. Causal AI separates smart decisions from lucky correlations.
