Monitoring Airflow on Kubernetes: A Production Stack

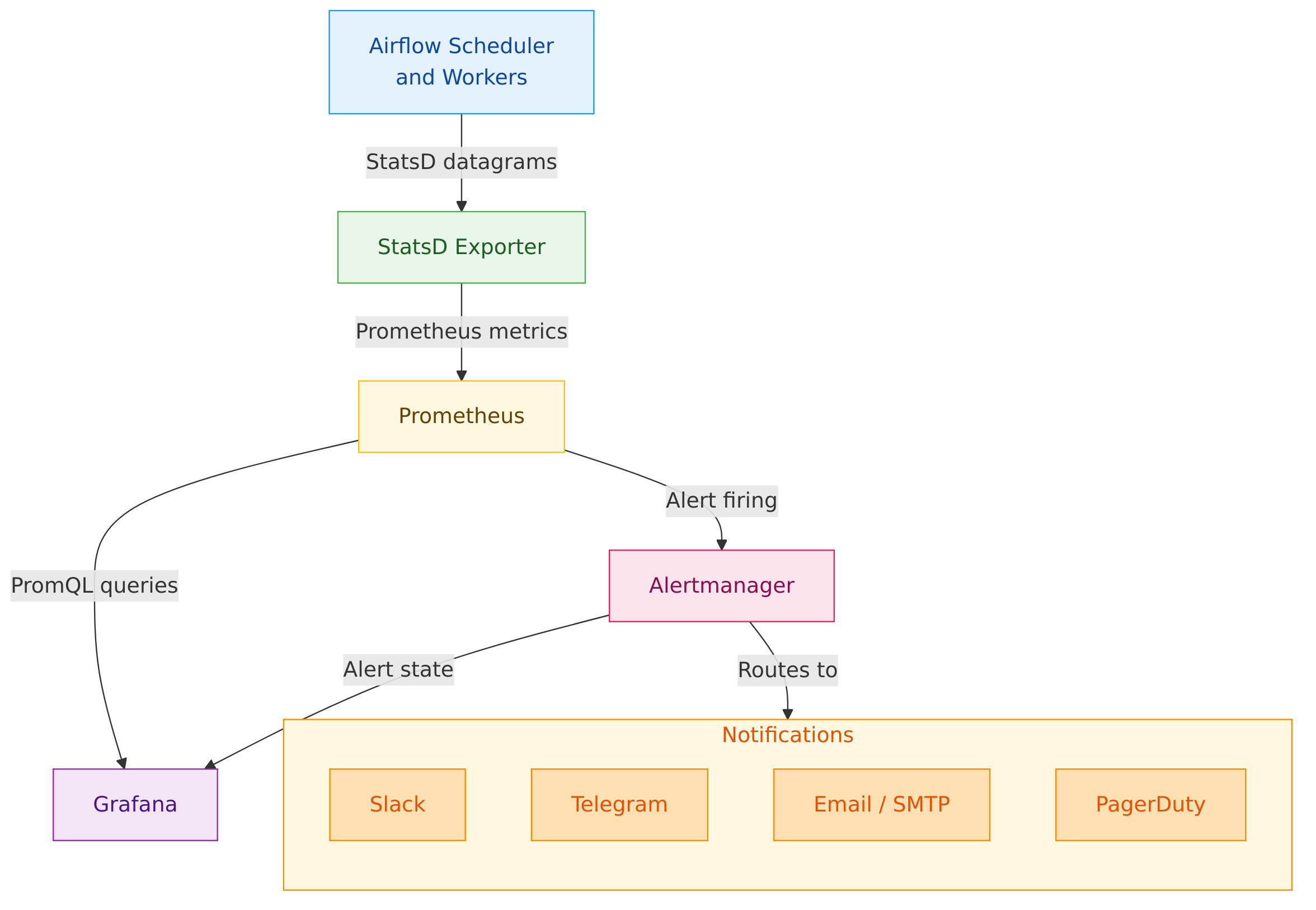

Kubernetes monitoring tells you whether Airflow is running. It does not tell you whether your pipelines are working. This post walks through the five-component stack we built to close that gap: Airflow StatsD metrics, a StatsD Exporter, Prometheus, Alertmanager, and Grafana, covering what each component does, how they connect, and the operational decisions behind the design.

If a pipeline silently stops running overnight, how long before your team finds out?

In many systems, the answer is: longer than it should be. That delay points to a gap in your monitoring.

The rest of this post covers the problem behind that gap, the architecture we built to close it for Airflow on Kubernetes, what each component does, and the decisions that shaped the design.

The Problem

When you run Airflow on Kubernetes, you get two layers of monitoring to think about.

The first layer is Kubernetes itself. Kubernetes knows whether your application is running. It checks if the container is alive. It tracks how much CPU and memory each application is using. It restarts containers that crash.

The second layer is Airflow. Airflow knows whether your pipelines are working. It tracks whether the scheduler is dispatching jobs. It knows whether tasks are succeeding or failing. It knows whether your DAGs are being parsed correctly.

The problem is that these two layers do not talk to each other. Kubernetes can see the container. It cannot see what is happening inside Airflow.

Here is a concrete example. Imagine the Airflow scheduler gets stuck and stops sending tasks to workers. From Kubernetes' perspective, everything is fine. The container is running. It is using normal amounts of memory and CPU. No errors. No restarts. But from Airflow's perspective, no pipeline has run for the last 30 minutes.

The Architecture

Below is the architecture of the monitoring stack we implemented. This system connects Airflow’s internal telemetry to a modern observability pipeline:

- Airflow emits metrics via StatsD, a lightweight UDP protocol designed for continuous, low-overhead instrumentation.

- A StatsD Exporter translates those metrics into a format Prometheus understands.

- Prometheus stores the data, evaluates alert rules, and forwards firing alerts to Alertmanager.

- Alertmanager processes those alerts and routes notifications to the appropriate teams and channels.

- Grafana provides interactive dashboards for both real-time monitoring and post-incident investigation.

[Diagram: data flow between the components of the stack. Edge labels, in order: "StatsD datagrams", "Prometheus metrics", "PromQL queries", "Alert firing", "Alert state", "Routes to".]

Component 1: Airflow's StatsD

Airflow's StatsD integration is what makes the rest of the stack possible. When enabled, Airflow continuously publishes its internal state as lightweight UDP messages. No external agents or changes to DAG code are required.

The signals that matter most for operational visibility:

- Scheduler heartbeat: The scheduler emits a heartbeat on every cycle. An absent heartbeat is the earliest and most reliable signal that something is wrong. When the heartbeat disappears, no DAGs are being scheduled. This is a critical signal because it covers the failure mode that Airflow's own notifications cannot cover: the scheduler being down entirely.

- Task outcomes: Airflow reports success and failure counts continuously. Monitoring the rate of change rather than the absolute count filters out the baseline noise of expected failures and surfaces the spikes that indicate real problems.

- Executor and pool capacity: The executor reports open and occupied slot counts. Resource pools report open, queued, and running slots. These metrics show whether the system has headroom or is approaching its limits, and they give early warning before capacity becomes a hard blocker.

- DAG processing health: The scheduler reports how many DAG files fail to parse and how long parsing takes. A DAG with an import error disappears from the scheduler without any visible symptom. Without this signal, the failure mode is invisible until someone notices a pipeline has not run.

- Schedule delay: The gap between a DAG's scheduled start time and its actual start time is a leading indicator of capacity pressure. It begins rising before the executor saturates, giving a window to respond proactively.
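To make the wire format concrete, here is a minimal Python sketch of what these signals look like as StatsD datagrams. The metric names follow Airflow's documented names under the default `airflow` prefix. The helper names `format_datagram` and `send_metric`, the host and port, and the example DAG and pool names are assumptions for illustration; Airflow's own client produces these messages once StatsD is enabled.

```python
import socket


def format_datagram(name: str, value: float, kind: str) -> bytes:
    """Encode one StatsD metric as `<name>:<value>|<type>`.

    kind is "c" (counter), "g" (gauge), or "ms" (timer).
    """
    if kind not in {"c", "g", "ms"}:
        raise ValueError(f"unsupported StatsD type: {kind!r}")
    return f"{name}:{value}|{kind}".encode("ascii")


def send_metric(datagram: bytes, host: str = "127.0.0.1", port: int = 8125) -> None:
    """Fire-and-forget send. UDP has no acknowledgement, so the sender
    never waits on the receiver. Host and port are illustrative."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(datagram, (host, port))


# One example datagram per signal listed above.
EXAMPLES = [
    format_datagram("airflow.scheduler_heartbeat", 1, "c"),
    format_datagram("airflow.ti_failures", 1, "c"),
    format_datagram("airflow.executor.open_slots", 12, "g"),
    format_datagram("airflow.pool.open_slots.default_pool", 8, "g"),
    format_datagram("airflow.dag_processing.import_errors", 0, "g"),
    format_datagram("airflow.dagrun.schedule_delay.example_dag", 350, "ms"),
]
```

Note how the last two pool and DAG examples carry their context (`default_pool`, `example_dag`) inside the metric name itself.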

Component 2: StatsD Exporter

Prometheus is pull-based. It queries its targets on a schedule. Airflow's StatsD integration is push-based. It sends metrics as it generates them and does not wait for a response. These two models are incompatible without a bridge.

The StatsD Exporter serves as that bridge. It receives Airflow's UDP datagrams, applies a mapping configuration, and exposes the result as a Prometheus-compatible HTTP endpoint.
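The push-to-pull idea can be sketched in a few lines of Python. This is a toy stand-in, not the StatsD Exporter's implementation: the class name `MiniBridge` is hypothetical, timers and sample rates are ignored, and there is no network code. It only shows the core move, which is to hold pushed values in memory and render them on demand in the Prometheus text format.

```python
from typing import Dict, Tuple


class MiniBridge:
    """Toy push-to-pull bridge: accept datagrams, hold state, render on scrape."""

    def __init__(self) -> None:
        # Prometheus metric name -> (metric type, current value)
        self._samples: Dict[str, Tuple[str, float]] = {}

    def ingest(self, datagram: bytes) -> None:
        """Handle one pushed datagram of the form `<name>:<value>|<type>`."""
        name, rest = datagram.decode("ascii").split(":", 1)
        parts = rest.split("|")  # any trailing sample-rate field is ignored
        value, kind = float(parts[0]), parts[1]
        prom_name = name.replace(".", "_").replace("-", "_")
        if kind == "c":
            # Counters accumulate across datagrams.
            _, current = self._samples.get(prom_name, ("counter", 0.0))
            self._samples[prom_name] = ("counter", current + value)
        elif kind == "g":
            # Gauges keep only the latest value.
            self._samples[prom_name] = ("gauge", value)
        # Timers ("ms") are skipped to keep the sketch short.

    def render(self) -> str:
        """Return the current state in Prometheus text exposition format."""
        lines = []
        for name in sorted(self._samples):
            prom_type, value = self._samples[name]
            lines.append(f"# TYPE {name} {prom_type}")
            lines.append(f"{name} {value}")
        return "\n".join(lines) + "\n"
```

In the real exporter, `render()` corresponds to what Prometheus receives when it scrapes the HTTP endpoint, and `ingest()` to what happens on each incoming UDP packet.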

The mapping step is the most important design detail in this component. Airflow encodes context in its metric names directly: DAG ID, task ID, and pool name are concatenated into the metric string. Without transformation, each pipeline and task produces a unique metric name. You cannot aggregate across DAGs, filter by task type, or write alert rules that apply system-wide.

The mapping configuration extracts those embedded dimensions and converts them into Prometheus labels. The result is a structured, multi-dimensional dataset. You can ask questions like "which DAGs have the highest failure rate?" or "how has average task duration trended this week?" These queries are not possible against unlabeled metrics.

This transformation happens at ingestion. Keeping the data well-structured at the source keeps everything downstream simple: alert rules, dashboard queries, and ad-hoc investigation all work with clean labeled data.
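The effect of the mapping step can be illustrated in Python. The real exporter is driven by a YAML mapping file, so the sketch below is only a model of the transformation, and the rule list, the output metric names, and the function name `map_metric` are all assumptions. The input names follow Airflow's documented patterns, such as `dag.<dag_id>.<task_id>.duration`.

```python
import re
from typing import Dict, List, Tuple

# (pattern over the dotted StatsD name, Prometheus metric name).
# Named groups become labels. Illustrative rules only, not a complete mapping.
MAPPING_RULES: List[Tuple[re.Pattern, str]] = [
    (re.compile(r"^airflow\.dagrun\.schedule_delay\.(?P<dag_id>[^.]+)$"),
     "airflow_dagrun_schedule_delay"),
    (re.compile(r"^airflow\.dag\.(?P<dag_id>[^.]+)\.(?P<task_id>[^.]+)\.duration$"),
     "airflow_task_duration"),
    (re.compile(r"^airflow\.pool\.open_slots\.(?P<pool>[^.]+)$"),
     "airflow_pool_open_slots"),
]


def map_metric(statsd_name: str) -> Tuple[str, Dict[str, str]]:
    """Return (prometheus_name, labels) for a dotted StatsD metric name."""
    for pattern, prom_name in MAPPING_RULES:
        match = pattern.match(statsd_name)
        if match:
            return prom_name, match.groupdict()
    # No rule matched: keep the name, flatten the dots, emit no labels.
    return statsd_name.replace(".", "_"), {}
```

With rules like these, `airflow.dag.etl_daily.load.duration` and `airflow.dag.billing.extract.duration` (hypothetical DAGs) land in the same `airflow_task_duration` series family, distinguished only by their `dag_id` and `task_id` labels, which is what makes cross-DAG aggregation possible.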

Component 3: Prometheus

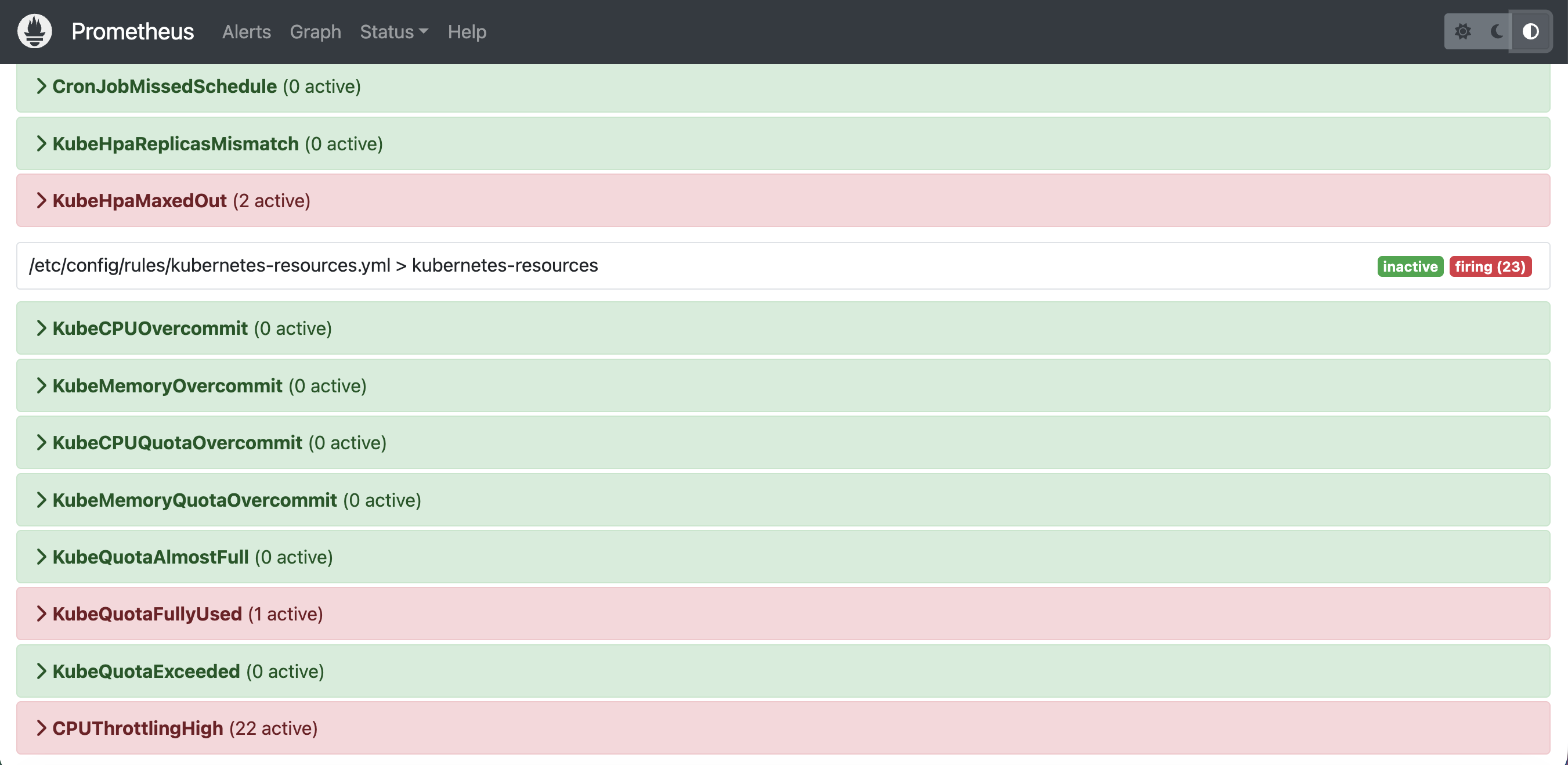

Prometheus does three things: collects metrics from all configured sources, stores them as time series, and evaluates alert rules continuously.

- Collection. Rather than maintaining a static target list, Prometheus uses Kubernetes service discovery. It queries the Kubernetes API for annotated pods and services and updates its scrape targets automatically. New exporters are discovered without manual configuration changes.

Beyond the StatsD Exporter, Prometheus scrapes three supporting exporters that provide the infrastructure view Airflow itself cannot see:

- Node Exporter runs as a DaemonSet on every node. It provides host-level signals: CPU, memory, disk I/O, and network throughput. This layer is important for distinguishing Airflow problems from infrastructure problems. A task failure caused by a node running out of memory looks like an application failure without this layer.

- Kube-State-Metrics exposes Kubernetes object state as metrics: pod phases, deployment replica counts, job completion status. This is the view of the system as Kubernetes understands it, complementing what the application itself reports.

- Kubernetes Event Exporter converts ephemeral Kubernetes events into durable time-series data. Kubernetes retains events for only a short time. The exporter makes OOMKills, pod evictions, and scheduling failures available for retrospective analysis long after the events themselves are gone.

- Storage. Prometheus stores metrics as time series on local disk, indexed by metric name and label set. Each data point is a timestamp and a value. This append-only model makes range queries and rate calculations efficient, which is what most Airflow alert rules depend on: not raw values, but how those values have changed over time.

- Alert evaluation. Prometheus evaluates alert rules continuously against stored data. Each rule encodes a specific failure condition, a duration it must persist before firing, and labels for severity and routing. Prometheus sends firing alerts to Alertmanager.
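To show why timestamped samples make "how values changed over time" cheap to compute, here is a simplified Python model of a rate calculation over a counter. It is in the spirit of PromQL's `rate()` but is not equivalent to it: it handles counter resets, yet skips the boundary extrapolation Prometheus performs. The function name and sample data are illustrative.

```python
from typing import List, Tuple

Sample = Tuple[float, float]  # (unix timestamp in seconds, counter value)


def counter_rate(samples: List[Sample]) -> float:
    """Average per-second increase across the window, tolerating resets."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    increase = 0.0
    for (_, prev), (_, curr) in zip(samples, samples[1:]):
        if curr >= prev:
            increase += curr - prev
        else:
            # The counter went backwards, e.g. after a process restart:
            # treat the new value as growth from zero.
            increase += curr
    elapsed = samples[-1][0] - samples[0][0]
    if elapsed <= 0:
        raise ValueError("samples must span a positive time range")
    return increase / elapsed


# A failure counter scraped every 60 seconds: 12 new failures in 120 seconds.
failures = [(0.0, 100.0), (60.0, 106.0), (120.0, 112.0)]
failures_per_second = counter_rate(failures)
```

A rule built on this kind of value reacts to a spike in failures while ignoring the absolute count, which only ever grows.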

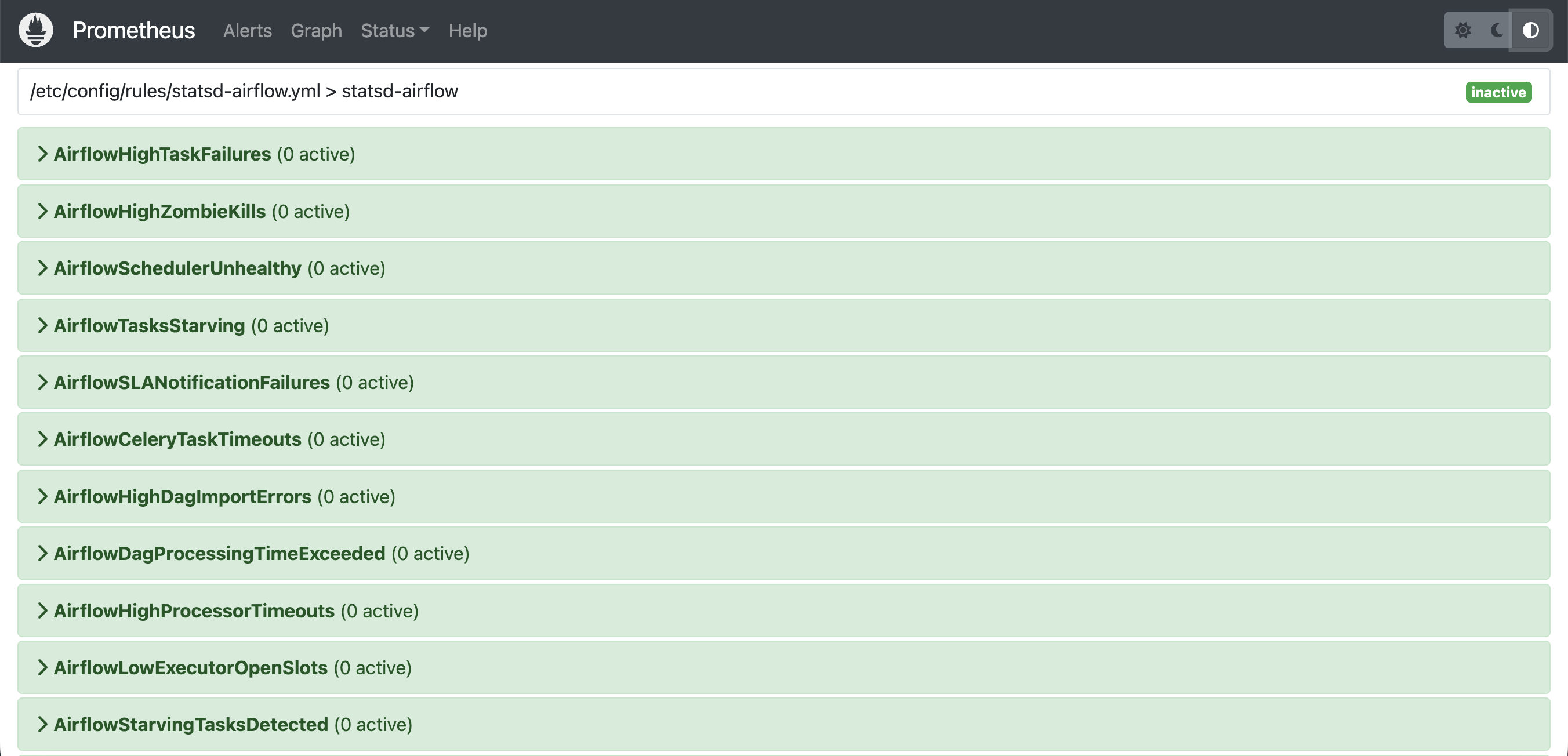

We maintain Airflow-specific rules for the failure modes that produce no signal at the Kubernetes layer:

- Scheduler health: whether the scheduler heartbeat is present and consistent.

- Task failure rates: spikes in failure counts beyond the expected baseline.

- Executor and pool saturation: slots approaching capacity before they become a hard blocker.

- DAG parse errors: files that fail to import and silently disappear from the scheduler.

- Scheduling delays: the gap between a DAG's scheduled start and its actual start, as a leading indicator of capacity pressure.
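The "duration it must persist before firing" behavior that all of these rules share can be modeled as a small state machine. The Python sketch below is an illustration of that idea, not Prometheus code; the class name, the rule name `SchedulerHeartbeatMissing`, and the 300-second duration are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AlertRule:
    """A condition that must hold for `for_seconds` before the alert fires."""

    name: str
    for_seconds: float
    active_since: Optional[float] = None  # when the condition first became true

    def evaluate(self, condition_true: bool, now: float) -> str:
        """Return "inactive", "pending", or "firing" for this evaluation."""
        if not condition_true:
            # Any healthy evaluation resets the clock.
            self.active_since = None
            return "inactive"
        if self.active_since is None:
            self.active_since = now
        if now - self.active_since >= self.for_seconds:
            return "firing"
        return "pending"


# Hypothetical rule: heartbeat rate has been zero for five minutes.
rule = AlertRule(name="SchedulerHeartbeatMissing", for_seconds=300)
states = [
    rule.evaluate(condition_true=True, now=0),    # condition just started
    rule.evaluate(condition_true=True, now=150),  # still within the window
    rule.evaluate(condition_true=True, now=300),  # persisted long enough
]
```

The pending state is what keeps a single missed heartbeat or a brief failure blip from paging anyone.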

Two design principles guided the rule set:

- Every rule should be actionable. If a rule fires and the correct response is to wait and see, the threshold needs adjustment.

- Every threshold should reflect actual system behavior. Rules calibrated against production baselines produce fewer false positives than rules set arbitrarily.
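One way to calibrate a threshold against a baseline is to take a high percentile of historical values and add headroom. The Python sketch below shows that approach under stated assumptions: the nearest-rank percentile method, the default quantile, and the headroom factor are illustrative choices, not the values or method used for the rules described here.

```python
import math
from typing import Sequence


def percentile(values: Sequence[float], q: float) -> float:
    """Nearest-rank percentile: the smallest value with at least q of the data at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    if not 0 < q <= 1:
        raise ValueError("q must be in (0, 1]")
    ordered = sorted(values)
    rank = math.ceil(q * len(ordered))
    return ordered[rank - 1]


def baseline_threshold(history: Sequence[float], q: float = 0.99,
                       headroom: float = 1.25) -> float:
    """Alert threshold = chosen percentile of observed behavior, plus headroom."""
    return percentile(history, q) * headroom
```

Feeding this a few weeks of observed schedule delays or failure rates gives a starting threshold that already accounts for normal peaks, which can then be adjusted as alerts prove actionable or not.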

Component 4: Alertmanager

Prometheus detects problems. Alertmanager is responsible for getting that information to the right people without creating noise that causes teams to tune out.

- Routing by team ownership. Airflow operational alerts are the responsibility of data engineering. Infrastructure alerts are the responsibility of platform engineering. Mixing these streams means both teams receive alerts outside their ownership, which creates friction and trains engineers to skim notifications rather than respond to them. Alertmanager routes on alert labels so each team receives only what they own.

- Inhibition for cascading failures. A single root cause often produces multiple downstream alerts. When the scheduler stops, no tasks are dispatched, workers go idle, and pipelines fall behind schedule. Without inhibition, one incident generates a burst of notifications about its own symptoms. Inhibition rules suppress downstream alerts when a root-cause alert is active, so the on-call engineer receives one notification about the real problem. Alert fatigue is a monitoring failure as serious as missing coverage.

- Notification pacing. Alertmanager waits briefly after the first alert in a group fires before sending, allowing related alerts to be bundled into a single notification. For ongoing problems, it repeats at a configured interval long enough to avoid interrupting an engineer actively working the problem, but short enough that an unresolved issue does not get forgotten.

- Environment-specific configuration. Preprod uses all notification channels intentionally. Engineers should encounter alert format, tone, and routing behavior in a low-stakes environment before a production incident requires an immediate response. Production uses a more focused channel set to keep noise low.
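Routing and inhibition are configured in Alertmanager's YAML (under `route` and `inhibit_rules`), but the logic is easy to model. The Python sketch below is such a model, with everything in it assumed for illustration: the `team` label, the receiver names, and the alert names. Real inhibition rules can also require matching labels between source and target alerts, which this sketch omits.

```python
from typing import Dict, List, Set

Alert = Dict[str, str]  # label name -> label value

# Hypothetical ownership table: value of the `team` label -> receiver.
ROUTES: Dict[str, str] = {
    "data-engineering": "data-eng-oncall",
    "platform": "platform-oncall",
}

# Hypothetical inhibition table: while the key alert is active,
# the alerts in the value set are suppressed as downstream symptoms.
INHIBIT: Dict[str, Set[str]] = {
    "SchedulerHeartbeatMissing": {"TaskThroughputLow", "ScheduleDelayHigh"},
}


def apply_inhibition(alerts: List[Alert]) -> List[Alert]:
    """Drop symptom alerts whose root-cause alert is currently active."""
    active = {a["alertname"] for a in alerts}
    muted: Set[str] = set()
    for source, targets in INHIBIT.items():
        if source in active:
            muted |= targets
    return [a for a in alerts if a["alertname"] not in muted]


def route(alerts: List[Alert]) -> Dict[str, List[str]]:
    """Group surviving alert names by the receiver that owns them."""
    notifications: Dict[str, List[str]] = {}
    for alert in apply_inhibition(alerts):
        receiver = ROUTES.get(alert.get("team", ""), "default")
        notifications.setdefault(receiver, []).append(alert["alertname"])
    return notifications
```

Given a stopped scheduler, a rising schedule delay, and an unrelated node alert, this model sends one notification to data engineering about the scheduler and one to platform engineering about the node; the schedule delay alert is suppressed as a symptom.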

Component 5: Grafana

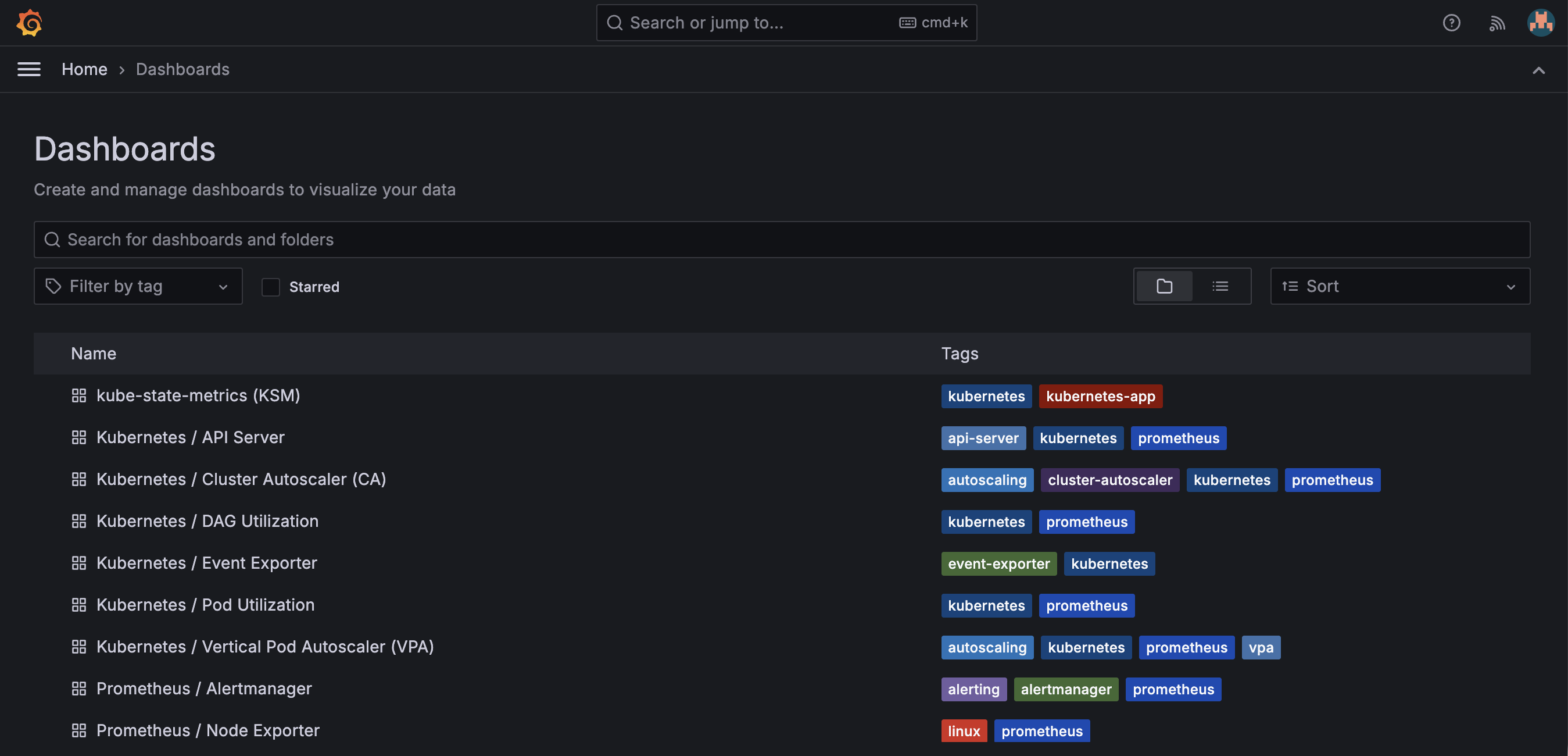

Grafana provides the human interface to the data. It connects to both Prometheus and Alertmanager, making it possible to see metric trends and active alerts in the same place.

- Airflow dashboards. Dashboards are organized by purpose: some are designed for routine health checks, others for digging into a specific problem. Two categories cover most use cases:

- Operational monitoring: The top-level dashboard is designed for at-a-glance health checks: scheduler state, task throughput, failure rates, and executor utilization on one screen. Engineers can confirm system health in seconds without digging into data.

- Per-DAG drilldown: Designed for investigation. Once an anomaly is detected, it isolates which workflows are affected and where in the execution pipeline the problem is occurring.

- Infrastructure dashboards. Additional dashboards cover the underlying platform: node resources, Kubernetes object state, cluster events, and API server health. These are not primarily Airflow dashboards, but they are essential for diagnosing whether a pipeline problem is an application issue or an infrastructure issue. Having both in the same tool removes a switching cost during incident investigation.

- Dashboards as code. Dashboards are provisioned as Kubernetes ConfigMaps, not built interactively in the Grafana UI. This means they are version controlled, reviewed like any other configuration change, and automatically consistent across environments. A dashboard changed during an incident can be reviewed afterward. A dashboard broken during a change can be rolled back.
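As a rough illustration of the dashboards-as-code idea, the Python sketch below wraps a dashboard definition in a ConfigMap manifest. The function name, the `grafana_dashboard` label (a convention used by some Grafana sidecar setups), and the example names are assumptions, not a description of the provisioning used here.

```python
import json
from typing import Any, Dict


def dashboard_configmap(name: str, namespace: str,
                        dashboard: Dict[str, Any]) -> Dict[str, Any]:
    """Build a ConfigMap manifest (as a dict) that carries one dashboard as JSON."""
    return {
        "apiVersion": "v1",
        "kind": "ConfigMap",
        "metadata": {
            "name": name,
            "namespace": namespace,
            # Assumption: a sidecar or provisioning step selects ConfigMaps
            # by this label and loads them into Grafana.
            "labels": {"grafana_dashboard": "1"},
        },
        "data": {
            # sort_keys keeps the serialized JSON stable, so diffs in code
            # review show only real changes.
            f"{name}.json": json.dumps(dashboard, indent=2, sort_keys=True),
        },
    }


manifest = dashboard_configmap(
    name="airflow-overview",
    namespace="monitoring",
    dashboard={"title": "Airflow Overview", "panels": []},
)
```

Because the manifest is plain data, it can be committed, diffed, and applied to each environment in the same way as any other Kubernetes resource.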

Operational Considerations

A monitoring system needs to be more reliable than the system it monitors. Three design decisions address this directly.

- Scheduling priority. Prometheus runs at a higher scheduling priority than Airflow workers. Under resource pressure, Kubernetes evicts lower-priority workloads first, so the monitoring system is among the last workloads to be evicted. It remains available during the incidents it is meant to detect.

- Dedicated node group. Monitoring components run on a separate node group from Airflow workers. A surge in parallel task execution cannot starve monitoring pods of CPU or memory. The infrastructure running the monitoring system is isolated from the infrastructure being monitored.

- Live configuration reloads. Alert rule updates and scrape target additions take effect without restarting Prometheus. During an incident, you can add a temporary scrape target or adjust a threshold without losing in-flight time-series data.
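One mechanism Prometheus offers for live reloads is its HTTP lifecycle endpoint, `POST /-/reload`, which is available only when the server is started with `--web.enable-lifecycle`. The Python sketch below shows a call to it. Whether this stack uses that endpoint, a signal, or a reloader sidecar is not stated here, so treat the approach, the function names, and the example URL as assumptions.

```python
import urllib.request


def build_reload_request(base_url: str) -> urllib.request.Request:
    """Build the POST request for Prometheus's configuration reload endpoint."""
    url = base_url.rstrip("/") + "/-/reload"
    return urllib.request.Request(url, data=b"", method="POST")


def reload_prometheus(base_url: str, timeout: float = 5.0) -> int:
    """Ask Prometheus to re-read its configuration and rule files.

    Returns the HTTP status code. Raises urllib.error.HTTPError or
    URLError if the reload is rejected or the server is unreachable.
    """
    request = build_reload_request(base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status
```

A call such as `reload_prometheus("http://prometheus.monitoring.svc:9090")` (hypothetical service address) would apply an edited rule file without a pod restart.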

Future Improvements

- Cross-cluster visibility. The current design is single-cluster, so comparing the health of multiple environments requires switching between them. Prometheus federation addresses this: a central instance pulls a curated subset of metrics from each cluster-level instance, enabling cross-environment dashboards and alerting without full metric replication.

- Long-term retention. The current retention window covers incident investigation and short-term trend analysis. Capacity planning and multi-month trends require a longer history. Thanos and Grafana Mimir both extend Prometheus with object-storage-backed retention: older data moves to S3 automatically and remains queryable from Grafana without changing the rest of the stack.

- Distributed tracing. Metrics show aggregate behavior: they tell you something was slow or failed more than expected, but not the execution path of a specific pipeline run. For Airflow, tracing would mean capturing the start and end of each task within a DAG run and any external calls made, making it possible to pinpoint exactly where time was lost. Grafana Tempo would add this capability without introducing a separate visualization tool.

Closing Thoughts

The failure modes that matter most in Airflow on Kubernetes are invisible to standard infrastructure monitoring. A scheduler that has stopped dispatching work, a DAG file that has silently failed to parse, an executor that has reached capacity: none of these produces a Kubernetes event or a container restart. A stopped scheduler does not trigger Airflow's own notifications either, because those notifications require the scheduler to be running.

Closing that gap requires an external monitoring system that observes Airflow's internal state independently. The stack described here does that systematically, with each component owning a distinct responsibility and clear interfaces between them.

The result is a system where your team learns about pipeline failures in minutes, with enough context to understand whether the problem is in the application, the platform, or somewhere in between.